Luminous Flow Start 217-525-5894 Shaping Reliable Lookup Results

Luminous Flow Start 217-525-5894 frames a disciplined approach to reliable lookups in noisy data. The method emphasizes explicit similarity thresholds, robust normalization, and provenance-aware filtering. It balances speed with accuracy through dimensionality control and context-aware matching. Governance and continuous evaluation guide adjustments, keeping results auditable as schemas evolve. The sections below examine the trade-offs involved and the mechanisms used to verify results.

How to Define Reliable Lookup in Noisy Datasets

Defining reliable lookup in noisy datasets requires a structured, criterion-driven approach. The analysis centers on objective metrics and repeatable protocols, ensuring consistent outcomes across varying conditions. Reliable matching emerges from clear similarity thresholds and dimensionality control, while noise mitigation prioritizes signal preservation over aggressive removal. Documentation emphasizes provenance, validation, and auditability, enabling informed adjustments without compromising interpretability or scalability.
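
As an illustration of an explicit similarity threshold, here is a minimal sketch. It assumes string keys and uses the standard-library `difflib.SequenceMatcher` as the similarity measure. The function name `best_match` and the 0.85 cutoff are hypothetical choices for this example, not values prescribed by the approach.

```python
from difflib import SequenceMatcher
from typing import Optional, Sequence, Tuple


def best_match(
    query: str,
    candidates: Sequence[str],
    threshold: float = 0.85,  # assumed cutoff; tune it against labeled data
) -> Optional[Tuple[str, float]]:
    """Return (candidate, score) for the best candidate at or above
    the threshold, or None when nothing qualifies."""
    best: Optional[Tuple[str, float]] = None
    for cand in candidates:
        # ratio() is a similarity score in [0, 1]; compare case-insensitively.
        score = SequenceMatcher(None, query.lower(), cand.lower()).ratio()
        if score >= threshold and (best is None or score > best[1]):
            best = (cand, score)
    return best
```

Returning `None` below the threshold, rather than the nearest candidate regardless of score, is what makes the criterion repeatable: the same inputs and cutoff always yield the same accept or reject decision.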

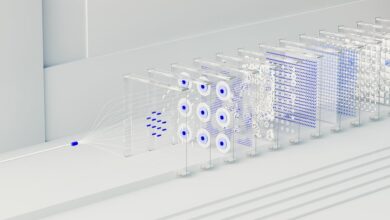

Building a Robust Lookup Architecture for Speed and Accuracy

A robust lookup architecture integrates structured data representations, efficient retrieval mechanisms, and rigorous validation to balance speed with accuracy. It emphasizes data integrity, modular components, and scalable storage. Noise resilience is strengthened through robust filtering and validation rules. Performance tuning targets latency and throughput, while error handling provides graceful degradation and clear diagnostics, ensuring reliable results under diverse, evolving conditions.
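
One way to picture the speed and accuracy balance is a two-stage lookup: a fast exact index first, then a slower fuzzy fallback. The sketch below is only an assumption about how such an architecture might look. The class `TwoStageLookup`, its `cutoff` parameter, and the status labels are hypothetical, and the fuzzy stage uses the standard-library `difflib.get_close_matches`.

```python
from difflib import get_close_matches
from typing import Dict, Optional, Tuple


class TwoStageLookup:
    """Exact hash lookup first, fuzzy fallback second (illustrative only)."""

    def __init__(self, records: Dict[str, str], cutoff: float = 0.8):
        # Lower-cased keys give a constant-time exact stage.
        self._index = {key.lower(): value for key, value in records.items()}
        self._cutoff = cutoff  # assumed similarity cutoff for the fuzzy stage

    def get(self, query: str) -> Tuple[Optional[str], str]:
        """Return (value, status), where status is 'exact', 'fuzzy', or 'miss'."""
        key = query.lower()
        if key in self._index:
            return self._index[key], "exact"
        # Slower path: scan all keys for the single closest one above the cutoff.
        close = get_close_matches(key, list(self._index), n=1, cutoff=self._cutoff)
        if close:
            return self._index[close[0]], "fuzzy"
        # Degrade gracefully: report a miss instead of raising.
        return None, "miss"
```

The status label returned with each value serves as a simple diagnostic: callers can tell whether a result came from the exact index or from the approximate path and treat it accordingly.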

Normalization, Context, and Error Handling in Lookups

How should normalization, context, and error handling shape lookups to ensure consistent results across varying inputs? Normalization reduces the variance introduced by heterogeneous sources, while context aligns fields and schemas to their anticipated semantics. Noise handling mitigates anomalies, and explicit error handling returns graceful, deterministic outcomes. This disciplined approach promotes stable, predictable results across diverse, real-world datasets.
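
The normalization and error-handling ideas can be sketched as follows, assuming text keys. The names `normalize_key`, `LookupResult`, and `lookup` are hypothetical, and the specific steps (Unicode NFKC, case folding, whitespace collapsing) are one plausible choice rather than a required recipe.

```python
import re
import unicodedata
from dataclasses import dataclass
from typing import Dict, Optional


def normalize_key(raw: str) -> str:
    """Reduce superficial variation before comparing keys."""
    # NFKC folds compatibility forms (full-width letters, no-break spaces).
    text = unicodedata.normalize("NFKC", raw)
    # casefold() is a more aggressive lower() intended for caseless matching.
    text = text.casefold()
    # Collapse runs of whitespace and trim the ends.
    return re.sub(r"\s+", " ", text).strip()


@dataclass(frozen=True)
class LookupResult:
    status: str  # "found", "not_found", or "invalid_input"
    value: Optional[str] = None


def lookup(table: Dict[str, str], raw: object) -> LookupResult:
    """Look up raw in a table whose keys were built with normalize_key.

    Bad input produces a result object instead of an exception, so
    every call has a deterministic outcome.
    """
    if not isinstance(raw, str) or not raw.strip():
        return LookupResult("invalid_input")
    key = normalize_key(raw)
    if key in table:
        return LookupResult("found", table[key])
    return LookupResult("not_found")
```

The table itself should be keyed with the same function, for example `{normalize_key(k): v for k, v in source.items()}`, so that both sides of the comparison pass through identical normalization.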

Evaluation, Monitoring, and Continuous Improvement of Results

Evaluation, monitoring, and continuous improvement of results follow from the established normalization, context alignment, and error-handling framework. The process measures data governance adherence, detects model drift, and quantifies accuracy over time. Systematic feedback informs parameter tuning, auditing, and governance updates. Transparent metrics enable disciplined adaptation, ensuring reliability while preserving freedom to evolve methods without compromising accountability or ethical considerations.
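
To make "quantifies accuracy over time" concrete, the sketch below computes precision and recall over a labeled batch and flags a drop against recent history. It assumes a labeled sample of (predicted, expected) pairs is available. The function names and the 0.05 tolerance are hypothetical.

```python
from statistics import mean
from typing import Iterable, Optional, Sequence, Tuple

Pair = Tuple[Optional[str], Optional[str]]  # (predicted, expected); None = no match


def precision_recall(pairs: Iterable[Pair]) -> Tuple[float, float]:
    """Precision = correct / returned; recall = correct / expected matches."""
    returned = relevant = correct = 0
    for predicted, expected in pairs:
        if predicted is not None:
            returned += 1
        if expected is not None:
            relevant += 1
        if predicted is not None and predicted == expected:
            correct += 1
    # By convention in this sketch, an empty denominator yields 0.0.
    precision = correct / returned if returned else 0.0
    recall = correct / relevant if relevant else 0.0
    return precision, recall


def has_regressed(
    history: Sequence[float], current: float, tolerance: float = 0.05
) -> bool:
    """Flag the current score if it falls more than tolerance below the
    mean of earlier scores. With no history there is no baseline."""
    if not history:
        return False
    return current < mean(history) - tolerance
```

Logging each batch's scores alongside the parameters in effect at the time gives the audit trail that later tuning decisions can be checked against.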

Conclusion

In summary, the approach supports dependable lookups amid noise by smoothing discrepancies and preserving essential signals. It achieves balance through deliberate normalization, contextual anchoring, and careful error handling, all while retaining traceable provenance. The architecture favors graceful degradation over brittle failure and guides ongoing tuning with transparent metrics. Within this framework, improvements arrive gradually and persistently, steering results toward closer alignment with evolving data realities.